KL Divergence

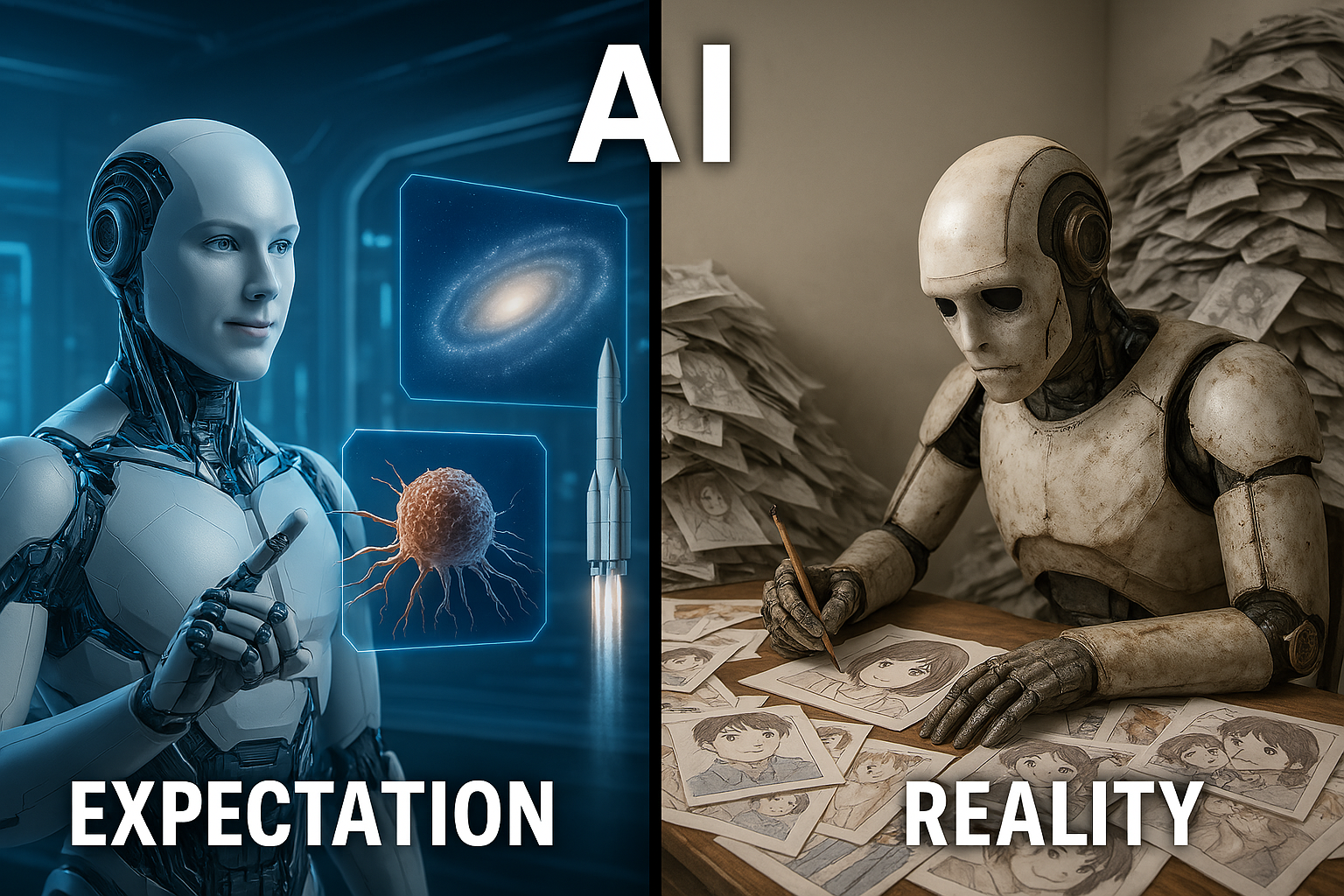

KL divergence is a measure of how expectation diverges from reality.

Kullback-Leibler divergence, often referred to as KL divergence, is a fundamental concept in machine learning whose somewhat intimidating name can make it seem confusing at first. Understanding the intuition behind it is worthwhile, because it plays a central role in areas such as variational autoencoders, reinforcement learning, knowledge distillation, and many generative models. At its core, KL divergence measures how one probability distribution diverges from a second, reference probability distribution.

KL divergence is closely related to the previously discussed concepts of entropy and cross entropy. Entropy $H(p)$ measures the expected surprisal of an event under the true distribution $p$. Cross entropy $H(p, q)$ measures the expected surprisal of an event under the model's predicted distribution $q$, weighting each event by its probability under the true distribution $p$. While the model's goal is to match the true distribution as closely as possible, some discrepancy is almost always present. KL divergence quantifies this discrepancy as the gap between these two quantities:

$$D_{KL}(p \,\|\, q) = H(p, q) - H(p) = -\sum_x p(x)\log q(x) + \sum_x p(x)\log p(x) = \sum_x p(x)\log\frac{p(x)}{q(x)}$$
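As a minimal sketch, assuming discrete distributions stored as plain Python lists and natural logarithms (so values are in nats; base 2 would give bits, and the identity holds either way), the three quantities can be computed directly and the relation $D_{KL}(p \,\|\, q) = H(p, q) - H(p)$ checked numerically. The distributions `p` and `q` are hypothetical values chosen for illustration.

```python
import math

def entropy(p):
    """H(p) = -sum_x p(x) log p(x); terms with p(x) = 0 contribute nothing."""
    return -sum(px * math.log(px) for px in p if px > 0)

def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) log q(x): surprisal under q, weighted by the true p."""
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)

def kl_divergence(p, q):
    """D_KL(p || q) = sum_x p(x) log(p(x) / q(x))."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

p = [0.5, 0.3, 0.2]  # hypothetical "true" distribution
q = [0.4, 0.4, 0.2]  # hypothetical model prediction

print(entropy(p), cross_entropy(p, q), kl_divergence(p, q))
# The identity D_KL(p || q) = H(p, q) - H(p) holds up to floating-point error
print(math.isclose(kl_divergence(p, q), cross_entropy(p, q) - entropy(p)))
# A model that matches p exactly has zero divergence
print(kl_divergence(p, p))
```

Computing KL directly from the ratio $p(x)/q(x)$ and computing it as a difference of the two surprisal averages give the same number, which is exactly the "divergence between two informational values" described above.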

Understanding KL Divergence as MLE

As mentioned earlier, KL divergence measures the difference between two distributions. In many machine learning scenarios, our goal is to train a model whose predicted probability distribution $q_\theta$ (parameterized by $\theta$) is as close as possible to the true underlying distribution $p$ of the data. Minimizing $D_{KL}(p \,\|\, q_\theta)$ is therefore a natural training objective.

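Looking at the formula above, the true distribution $p$ is given, so the entropy $H(p)$ is a constant with respect to our model parameters $\theta$. Minimizing KL divergence is therefore equivalent to finding the model parameters $\theta$ that minimize cross entropy:

$$\theta^* = \arg\min_\theta D_{KL}(p \,\|\, q_\theta) = \arg\min_\theta \big[H(p, q_\theta) - H(p)\big] = \arg\min_\theta H(p, q_\theta)$$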
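To see the "constant offset" argument numerically, here is a small sketch that assumes a hypothetical one-parameter model family, a Bernoulli distribution $q_\theta = [\theta, 1 - \theta]$, and a fixed true distribution `p`. For every candidate $\theta$, cross entropy minus KL divergence comes out to the same value, $H(p)$.

```python
import math

def entropy(p):
    return -sum(px * math.log(px) for px in p if px > 0)

def cross_entropy(p, q):
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)

def kl_divergence(p, q):
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

def model(theta):
    # Hypothetical one-parameter family: Bernoulli distribution [theta, 1 - theta]
    return [theta, 1.0 - theta]

p = [0.7, 0.3]                      # fixed true distribution
thetas = [0.1, 0.3, 0.5, 0.7, 0.9]  # a few candidate parameters

for t in thetas:
    q = model(t)
    ce, kl = cross_entropy(p, q), kl_divergence(p, q)
    # ce - kl does not depend on theta: it is always H(p)
    print(f"theta={t:.1f}  H(p,q)={ce:.4f}  KL={kl:.4f}  H(p,q)-KL={ce - kl:.4f}")

gaps = [cross_entropy(p, model(t)) - kl_divergence(p, model(t)) for t in thetas]
print("H(p) =", entropy(p))
```

Because the two objectives differ only by a term that does not involve $\theta$, they rank every candidate in the same order and share the same minimizer.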

Now, let's see why minimizing this cross entropy is equivalent to performing maximum likelihood estimation.

As previously discussed in Likelihood and Maximum Likelihood Estimation, the goal of MLE is to find the parameters $\theta$ of a model distribution $q_\theta$ that best explain a set of observed data points $x_1, \dots, x_N$, assuming these data points are independently and identically distributed (i.i.d.) samples from the true distribution $p$. The log-likelihood of the observed data under parameters $\theta$ is:

$$\log L(\theta) = \log \prod_{i=1}^{N} q_\theta(x_i) = \sum_{i=1}^{N} \log q_\theta(x_i)$$
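A minimal sketch of this log-likelihood, assuming a hypothetical categorical model over the outcomes {0, 1, 2} stored as a list indexed by outcome, and a small made-up i.i.d. sample. Independence is what lets the likelihood factor into a product, so its logarithm becomes a sum.

```python
import math

def log_likelihood(data, q_theta):
    """log L(theta) = sum_i log q_theta(x_i) for i.i.d. observations x_1..x_N."""
    return sum(math.log(q_theta[x]) for x in data)

q_theta = [0.5, 0.3, 0.2]   # hypothetical model probabilities for outcomes 0, 1, 2
data = [0, 0, 1, 2, 0, 1]   # hypothetical i.i.d. observations

ll = log_likelihood(data, q_theta)
# The likelihood itself is the product of per-sample probabilities
product = math.prod(q_theta[x] for x in data)
print(ll, math.log(product))  # the two values agree up to floating-point error
```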

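In practice, we usually don't know the true distribution $p$. However, given the observed data points, we can use the empirical distribution $\hat{p}$, which assigns a probability of $\frac{1}{N}$ to each observed data point (so a value observed $k$ times receives probability $\frac{k}{N}$). The cross entropy between this empirical distribution and the model distribution is:

$$H(\hat{p}, q_\theta) = -\sum_x \hat{p}(x)\log q_\theta(x) = -\frac{1}{N}\sum_{i=1}^{N}\log q_\theta(x_i) = -\frac{1}{N}\log L(\theta)$$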
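The middle step of this derivation, replacing a sum over distinct values $x$ by a sum over the $N$ samples, can be checked with a short sketch. It assumes a hypothetical categorical model stored as a dictionary and builds $\hat{p}$ from counts with `collections.Counter`.

```python
import math
from collections import Counter

def empirical_distribution(data):
    """p_hat(x) = count(x) / N: each observation contributes probability 1/N."""
    n = len(data)
    return {x: c / n for x, c in Counter(data).items()}

def cross_entropy(p_hat, q_theta):
    """H(p_hat, q_theta) = -sum_x p_hat(x) log q_theta(x), over the support of p_hat."""
    return -sum(px * math.log(q_theta[x]) for x, px in p_hat.items())

def avg_log_likelihood(data, q_theta):
    """(1/N) * sum_i log q_theta(x_i)."""
    return sum(math.log(q_theta[x]) for x in data) / len(data)

data = [0, 0, 1, 2, 0, 1]            # hypothetical observations
q_theta = {0: 0.5, 1: 0.3, 2: 0.2}   # hypothetical model distribution

p_hat = empirical_distribution(data)
print(p_hat)
# Cross entropy against p_hat equals the negative average log-likelihood
print(cross_entropy(p_hat, q_theta), -avg_log_likelihood(data, q_theta))
```

Grouping repeated observations is the only difference between the two computations: a value seen $k$ times appears once with weight $\frac{k}{N}$ on one side and $k$ times with weight $\frac{1}{N}$ on the other.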

This shows that minimizing the cross entropy between the empirical distribution of our observed data and our model's distribution is equivalent to maximizing the average log-likelihood of the data under the model. Since the entropy $H(\hat{p})$ of the empirical distribution does not depend on $\theta$, training a model by minimizing the KL divergence $D_{KL}(\hat{p} \,\|\, q_\theta)$ between the empirical data distribution and the model distribution is fundamentally the same as performing maximum likelihood estimation.
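An end-to-end sketch of this equivalence, assuming a hypothetical Bernoulli model ($q_\theta(1) = \theta$) fit to made-up coin flips. A simple grid search stands in for the gradient-based optimization a real model would use; minimizing $D_{KL}(\hat{p} \,\|\, q_\theta)$ and maximizing $\log L(\theta)$ pick the same parameter, the observed fraction of heads.

```python
import math

def kl_empirical(data, theta):
    """D_KL(p_hat || q_theta) for binary data, with q_theta(1) = theta, q_theta(0) = 1 - theta."""
    n = len(data)
    p_hat = {1: sum(data) / n}
    p_hat[0] = 1.0 - p_hat[1]
    q = {1: theta, 0: 1.0 - theta}
    return sum(px * math.log(px / q[x]) for x, px in p_hat.items() if px > 0)

def log_likelihood(data, theta):
    """log L(theta) = sum_i log q_theta(x_i) under the Bernoulli model."""
    return sum(math.log(theta if x == 1 else 1.0 - theta) for x in data)

data = [1, 0, 1, 1, 0, 1, 1, 0, 1, 1]       # hypothetical coin flips: 7 heads out of 10
thetas = [i / 100 for i in range(1, 100)]   # grid of candidates 0.01 .. 0.99

theta_kl = min(thetas, key=lambda t: kl_empirical(data, t))
theta_mle = max(thetas, key=lambda t: log_likelihood(data, t))
print(theta_kl, theta_mle)  # both 0.7, the fraction of heads
```

Since $\log L(\theta) = -N\big[H(\hat{p}) + D_{KL}(\hat{p} \,\|\, q_\theta)\big]$, the two searches are scanning the same curve up to a sign, a scale factor $N$, and a constant shift.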

